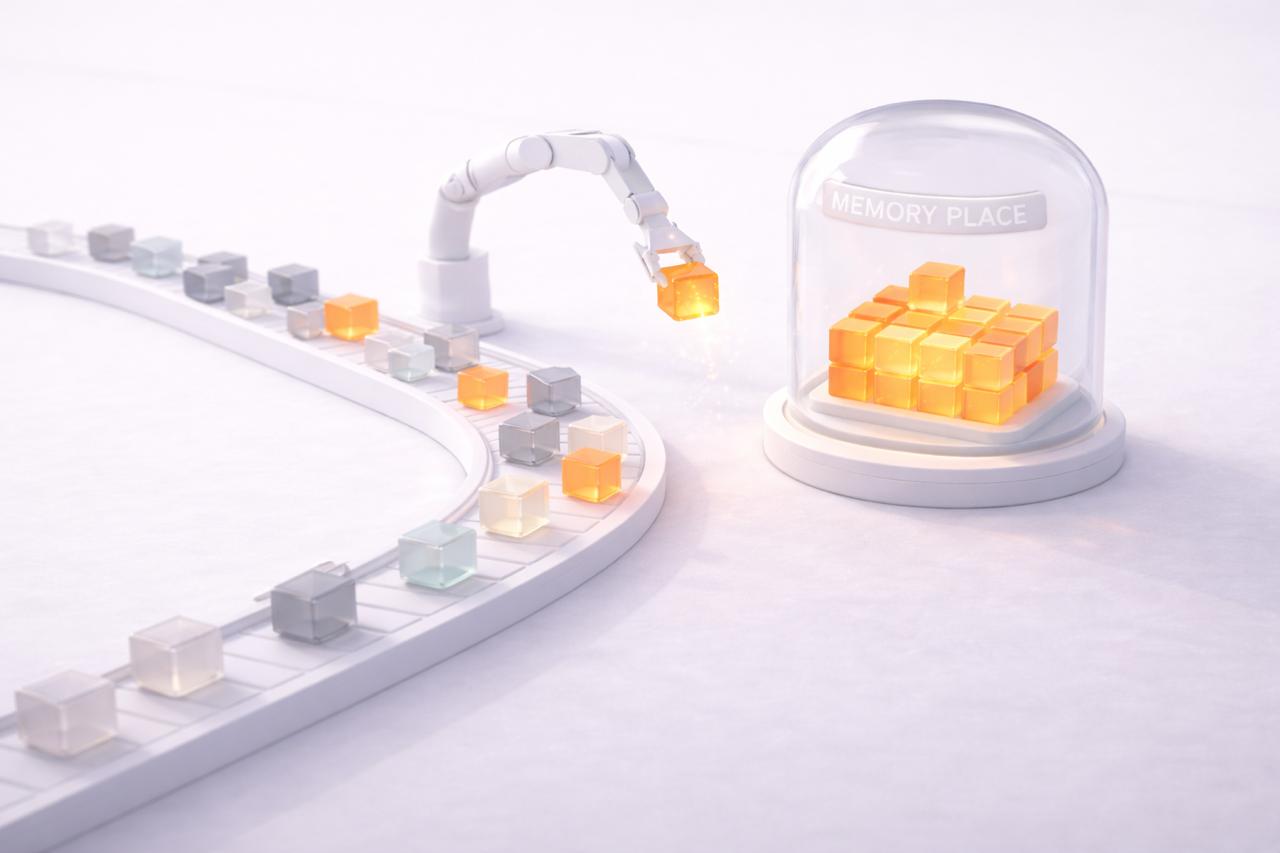

If you're building AI agents, you've probably run into the memory problem. The agent forgets context, contradicts itself, or retrieves the wrong information at the wrong time. This post breaks down what we've learned about managing memory in agentic systems: the types, the layers, and the common challenges.

The Three Types of Memory

Procedural memory is how the agent knows what to do. These are instructions, workflows, and learned routines. E.g. "When a user asks for a prior auth, pull the policy document first, then extract the relevant criteria." In practice, this lives in system prompts, skill files, or structured instruction sets that the agent follows at runtime.

Semantic memory is what the agent knows as facts. Patient preferences, org-specific terminology, drug formulary rules, and provider credentials are all stored knowledge the agent draws on regardless of what happened in the current session. This is typically persisted in a vector store or structured database and retrieved as needed.

Episodic memory is what happened. Conversation history, past interactions, prior decisions the agent made and their outcomes. E.g. "Last time we submitted a prior auth for this patient, it was denied because we missed the step therapy requirement." Episodic memory gives agents the ability to learn from experience.

Getting the balance right between these three types matters. Too much procedural memory and the agent becomes rigid. Too much episodic memory and retrieval gets noisy. Too little semantic memory and the agent keeps asking for things it should already know.
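One way to keep the three types from blurring together is to tag every stored record with its type, so each can be budgeted and retrieved separately. The sketch below is a minimal illustration, not a prescribed schema: `MemoryType`, `MemoryRecord`, and `group_by_type` are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class MemoryType(Enum):
    PROCEDURAL = "procedural"  # how to do things: instructions, workflows
    SEMANTIC = "semantic"      # stable facts the agent knows
    EPISODIC = "episodic"      # what happened, and how it turned out


@dataclass
class MemoryRecord:
    # Hypothetical record shape, assumed for illustration only.
    kind: MemoryType
    text: str
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def group_by_type(records):
    """Bucket records by memory type so each type can be handled on its own."""
    buckets = {kind: [] for kind in MemoryType}
    for record in records:
        buckets[record.kind].append(record)
    return buckets


records = [
    MemoryRecord(MemoryType.PROCEDURAL, "For a prior auth, pull the policy document first."),
    MemoryRecord(MemoryType.SEMANTIC, "Patient prefers morning appointments due to insulin schedule."),
    MemoryRecord(MemoryType.EPISODIC, "Last prior auth was denied: missed step therapy requirement."),
]
buckets = group_by_type(records)
```

With records bucketed like this, it becomes straightforward to cap how much of each type reaches the prompt, which is one way to act on the balance described above.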

The Core Problem

LLMs are stateless. Agents need state. Every memory system is just a way to bridge that gap. When your agent forgets something it knew last week or starts contradicting itself, it's tempting to blame the model's capability. Most of the time the model is fine, and the memory pipeline is the likely culprit.

Memory Layers and Common Challenges

Working memory (the context window). This is what the model can see right now. The failure here often isn't forgetting, it's overload. Cram too much in and the model loses the ability to find what matters. It has helped us to treat the context window like a small, expensive register.
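Treating the context window as a scarce resource can be as simple as packing it under an explicit budget. The sketch below is an assumption-laden illustration: `estimate_tokens` is a crude word-count stand-in for a real tokenizer, and the priority scores are made up for the example.

```python
def estimate_tokens(text):
    # Crude stand-in: whitespace word count. A real system would use the
    # model's own tokenizer here.
    return len(text.split())


def pack_context(candidates, budget):
    """Greedily keep the highest-priority snippets that fit in the budget.

    candidates: list of (priority, text) tuples; higher priority wins.
    Returns the chosen texts and the number of estimated tokens used.
    """
    chosen = []
    used = 0
    for priority, text in sorted(candidates, key=lambda c: c[0], reverse=True):
        cost = estimate_tokens(text)
        if used + cost <= budget:
            chosen.append(text)
            used += cost
    return chosen, used


candidates = [
    (0.9, "Patient is allergic to penicillin."),
    (0.2, "Patient mentioned the weather was nice during the last call."),
    (0.7, "Prior auth denied last time: step therapy missing."),
]
chosen, used = pack_context(candidates, budget=15)
```

Here the low-priority small talk is the item that gets left out, which is the point: the budget forces a decision about what matters instead of letting everything in.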

Session state (recent history). This is everything from current and recent sessions that you haven't committed to long-term storage yet. Common failures: the index goes stale, session logs grow forever without cleanup, and keyword search over natural-language transcripts returns low-quality results.
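The unbounded-log failure in particular has a cheap fix: prune on a schedule. The sketch below assumes session entries are simple `(timestamp, text)` tuples and that an age limit plus a size cap is an acceptable policy; both are assumptions for illustration, and `prune_session_log` is a hypothetical helper.

```python
from datetime import datetime, timedelta, timezone


def prune_session_log(entries, now, max_age, max_entries):
    """Drop entries older than max_age, then keep only the newest max_entries.

    entries: list of (timestamp, text) tuples.
    """
    fresh = [e for e in entries if now - e[0] <= max_age]
    fresh.sort(key=lambda e: e[0])
    # Slice from the end; max() guards the max_entries == 0 case.
    return fresh[max(0, len(fresh) - max_entries):]


now = datetime(2024, 1, 10, tzinfo=timezone.utc)
log = [
    (now - timedelta(days=9), "Asked about formulary tiers."),
    (now - timedelta(days=2), "Started prior auth for patient."),
    (now - timedelta(days=1), "Uploaded policy document."),
    (now, "Asked for submission status."),
]
kept = prune_session_log(log, now, max_age=timedelta(days=3), max_entries=2)
```

Anything worth keeping past the age limit should be promoted to long-term memory before pruning runs, and any search index built over the log should be refreshed afterwards so it does not go stale.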

Long-term memory (durable knowledge). These are facts and instructions that have to survive agent restarts. A common challenge with long-term memory is reviewing and cleaning up older, contradictory entries. Two facts that are individually true but jointly inconsistent can sit in memory and degrade the agent's outputs.
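A first pass at catching contradictions can be purely structural. The sketch below assumes facts are stored as `(subject, attribute, value)` triples, which is an assumption made for this example, and flags any subject/attribute pair holding more than one distinct value. It will not catch subtler inconsistencies that span different attributes; those still need review.

```python
from collections import defaultdict


def find_conflicts(facts):
    """Return {(subject, attribute): sorted values} for keys with >1 distinct value."""
    values = defaultdict(set)
    for subject, attribute, value in facts:
        values[(subject, attribute)].add(value)
    return {key: sorted(vals) for key, vals in values.items() if len(vals) > 1}


facts = [
    ("patient_42", "preferred_pharmacy", "Main St"),
    ("patient_42", "preferred_pharmacy", "Oak Ave"),
    ("patient_42", "language", "Spanish"),
]
conflicts = find_conflicts(facts)
```

The flagged pairs are candidates for cleanup, not automatic deletions: which value is current is a judgment the sketch deliberately leaves to a reviewer or a separate policy.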

Retrieval

Most systems use keyword search, vector search, or both. That works reasonably well, but structured metadata can improve it. Tag memories with metadata at write time (entities, event type, importance, time scope), then use that metadata as a pre-filter before vector or hybrid search. It is more work upfront, but it buys better precision at read time.
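The filter-then-rank idea can be sketched in a few lines. Everything here is illustrative: the `Memory` fields, the two-dimensional toy embeddings, and the `search` function are assumptions for the example, and a real system would use an embedding model and a vector store's own filtering support.

```python
import math
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    embedding: list      # toy vectors; a real system would call an embedding model
    entities: frozenset  # tagged at write time
    event_type: str      # tagged at write time


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(memories, query_embedding, entity, event_type=None, top_k=3):
    """Filter on metadata first, then rank the survivors by cosine similarity."""
    pool = [
        m for m in memories
        if entity in m.entities and (event_type is None or m.event_type == event_type)
    ]
    pool.sort(key=lambda m: cosine(m.embedding, query_embedding), reverse=True)
    return pool[:top_k]


memories = [
    Memory("Prior auth denied: missed step therapy.", [1.0, 0.0], frozenset({"patient_a"}), "prior_auth"),
    Memory("Prefers morning appointments.", [0.0, 1.0], frozenset({"patient_a"}), "preference"),
    Memory("Prior auth approved.", [1.0, 0.0], frozenset({"patient_b"}), "prior_auth"),
]
results = search(memories, [0.9, 0.1], entity="patient_a")
```

Note that the `patient_b` record is just as similar to the query as the top hit, and similarity alone would have returned it. The pre-filter is what keeps another patient's history out of the results.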

Compaction

Compaction removes redundant information and keeps the high-value data. But over-compacting, or generalizing in a way that loses crucial information, causes problems. E.g. when "Patient prefers morning appointments due to insulin schedule" becomes "Patient prefers morning appointments", the reason behind the preference is gone. Nuance matters.

The recommended path is to separate the two operations. Compaction (dedup, merge) should run frequently and cheaply. Generalization (inferring patterns from accumulated facts) should run less often, ideally with human review. Never let the agent compact its own instructions in real time.
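To make the cheap half concrete, here is a minimal sketch of a compaction pass that only ever drops text that is fully contained in something it keeps. The prefix heuristic and the `compact` helper are assumptions chosen for illustration, not a complete dedup strategy. When one entry is a word-level prefix of another, the more detailed entry wins, so the insulin-schedule detail survives.

```python
def tokens(text):
    # Lowercase, trim, drop trailing sentence punctuation, split on whitespace.
    return tuple(text.lower().strip().rstrip(".!?").split())


def is_prefix(short, long):
    return len(short) <= len(long) and long[:len(short)] == short


def compact(entries):
    """Dedup entries; if one is a word-level prefix of another, keep the longer."""
    kept = []  # (tokens, original text) pairs, in first-seen order
    for entry in entries:
        toks = tokens(entry)
        for i, (existing, _) in enumerate(kept):
            if is_prefix(toks, existing):
                break  # duplicate, or less detailed than what is already stored
            if is_prefix(existing, toks):
                kept[i] = (toks, entry)  # new entry adds detail: keep it instead
                break
        else:
            kept.append((toks, entry))
    return [text for _, text in kept]


entries = [
    "Patient prefers morning appointments.",
    "Patient prefers morning appointments due to insulin schedule.",
    "patient prefers  morning appointments",
    "Allergic to penicillin.",
]
compacted = compact(entries)
```

Because nothing is paraphrased or inferred, a pass like this is safe to run often. Anything that rewrites or summarizes entries belongs on the generalization side, with the slower cadence and the review step.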

Tooling Evolution

Tooling around agent memory is still in its early stages. I expect more tools to be built to address today's gaps, such as transactional consistency for writes, conflict detection at read time, metadata, structured forgetting (deletion vs. expiration vs. deprecation), and cross-agent consistency guarantees. One caveat: this could all change as LLMs evolve.
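As an illustration of what structured forgetting might look like, the sketch below treats the three modes differently. The interpretation is our assumption, not an established standard: deletion removes the record, expiration hides it after a timestamp, and deprecation keeps it for audit but points at its replacement. `Entry` and `MemoryStore` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Entry:
    text: str
    expires_at: Optional[datetime] = None  # expiration: not served after this time
    superseded_by: Optional[str] = None    # deprecation: kept, but points at its replacement


class MemoryStore:
    def __init__(self):
        self.entries = {}

    def delete(self, key):
        # Deletion: the record is removed entirely.
        self.entries.pop(key, None)

    def deprecate(self, key, replacement_key):
        # Deprecation: the record stays in the store but is hidden from retrieval.
        self.entries[key].superseded_by = replacement_key

    def retrievable(self, now):
        """Keys that are neither deprecated nor expired at `now`."""
        return [
            key for key, e in self.entries.items()
            if e.superseded_by is None and (e.expires_at is None or now < e.expires_at)
        ]


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
store = MemoryStore()
store.entries["pharmacy_v1"] = Entry("Preferred pharmacy: Main St")
store.entries["pharmacy_v2"] = Entry("Preferred pharmacy: Oak Ave")
store.entries["temp_hold"] = Entry(
    "Hold refills this week", expires_at=datetime(2024, 5, 1, tzinfo=timezone.utc)
)
store.entries["typo"] = Entry("Prefered pharmcy: ???")

store.deprecate("pharmacy_v1", "pharmacy_v2")
store.delete("typo")
visible = store.retrievable(now)
```

Only the current pharmacy entry is served, while the deprecated and expired entries remain in the store for later inspection and the deleted one is gone for good.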

The Bottom Line

Better memory makes agents more capable. Every memory write is essentially a parameter update happening in production, at the pace of user interactions.

Have fun building AI apps!