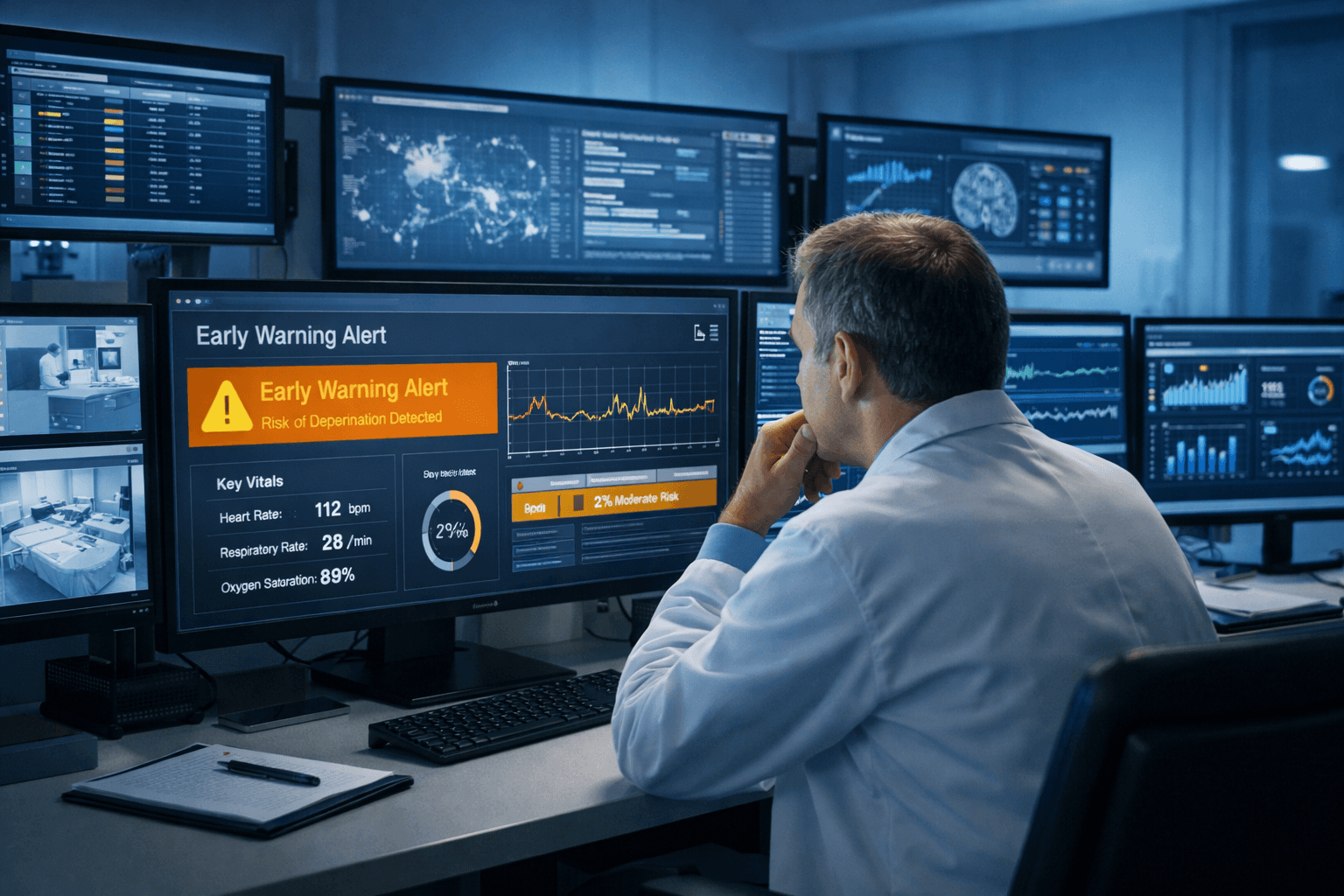

Healthcare AI doesn't fail all at once. It drifts, degrades, and creates risk in ways standard testing misses. We build evaluation systems that detect problems before they reach patients and demonstrate reliability to the stakeholders who need proof.

What Are AI Evaluations?

Evaluations are systematic processes that measure AI accuracy, clinical appropriateness, and compliance with requirements over time.

They answer three questions:

Does this AI work? (Accuracy, completeness, reasoning quality)

Does it work for everyone? (Performance across conditions, demographics, edge cases)

Does it keep working? (Detecting degradation before it causes problems)

Without evaluations, you're deploying blind. You won't know when performance degrades, when bias emerges, or when AI starts generating clinically inappropriate content.

For product builders:

Evaluations are how you prove to hospitals that your AI meets clinical standards. Hospital procurement committees will ask for evaluation data on real EHR data, clinical expert review results, and bias audits. Without them, you won't make it past the technical review.

For hospital buyers:

Evaluations are how you verify vendor claims before putting AI into production. Vendor benchmark scores tell you nothing about performance on your patient population with your workflows. Demand evaluation on your data before signing contracts.

Why Healthcare AI Needs Continuous Evaluation

AI models degrade over time. What worked last quarter might fail this quarter because:

Without continuous evaluation, you discover these problems when:

Physicians reject AI documentation repeatedly (trust collapses)

Payer denials spike (revenue impact)

Clinical staff report inappropriate recommendations (safety risk)

Regulators audit and find issues (compliance violation)

With continuous evaluation, you discover problems before they reach patients.

Why Benchmarks Don't Tell You What You Need to Know

Benchmarks are standardized tests used to evaluate AI performance.

In healthcare, these include medical licensing exams (USMLE), question-answering datasets (MedQA, PubMedQA), and clinical reasoning tests. They provide a consistent way to compare different AI models on the same tasks. But benchmark performance doesn't predict production performance. GPT-4 passed medical licensing exams with near-perfect scores. When tested on real physician queries, it achieved 65% accuracy.

The gap between test performance and clinical reality:

A prior authorization agent that passes all benchmarks can still fail in production because benchmarks don't test system integration, error recovery, human coordination, or audit trail compliance.

Layer 1: Automated Evaluations (Run on Every Output)

Accuracy checks

Does AI-generated content match source data?

Example: AI says "Patient's HbA1c is 8.2%." Automated check verifies this against actual lab value in EHR. Mismatch triggers alert.
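A minimal sketch of this kind of check, assuming the relevant lab values are already available as a plain dictionary. The `ehr_labs` structure, the regular expression, and the `check_lab_claims` name are illustrative assumptions, not part of any particular EHR API:

```python
import re

# Hypothetical pattern: matches phrases such as "HbA1c is 8.2%".
LAB_CLAIM = re.compile(r"(?P<test>[A-Za-z0-9]+) is (?P<value>\d+(?:\.\d+)?)\s*%")

def check_lab_claims(text, ehr_labs, tolerance=0.05):
    """Return (test, stated, actual) tuples where the text disagrees with the EHR."""
    mismatches = []
    for match in LAB_CLAIM.finditer(text):
        test = match.group("test")
        stated = float(match.group("value"))
        actual = ehr_labs.get(test)
        if actual is None:
            # Nothing on file to verify against: surface it rather than pass silently.
            mismatches.append((test, stated, None))
        elif abs(stated - actual) > tolerance:
            mismatches.append((test, stated, actual))
    return mismatches

# Assumed EHR value of 7.4 for illustration; the stated 8.2 should be flagged.
alerts = check_lab_claims("Patient's HbA1c is 8.2%.", {"HbA1c": 7.4})
```

A real implementation would likely need structured extraction rather than a single regex, but the shape is the same: extract the claim, look up the source value, alert on disagreement.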

Completeness checks

Are required elements present?

Example: Prior authorization requires clinical indication, relevant history, medical necessity. Check confirms all sections populated.
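As a rough sketch, a completeness check can be as simple as confirming each required section is present and non-empty. The section keys and the dictionary layout below are assumptions for illustration:

```python
# Hypothetical section names for a prior authorization document.
REQUIRED_SECTIONS = ("clinical_indication", "relevant_history", "medical_necessity")

def missing_sections(document, required=REQUIRED_SECTIONS):
    """Return required sections that are absent or contain only whitespace."""
    return [name for name in required if not str(document.get(name) or "").strip()]

draft = {
    "clinical_indication": "Type 2 diabetes, HbA1c above target",
    "relevant_history": "   ",  # present but blank
    # medical_necessity is missing entirely
}
gaps = missing_sections(draft)
```

This only confirms the sections are populated. Whether the content is clinically adequate is a question for expert review.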

Consistency checks

Do similar cases produce similar outputs?

Example: Two patients with identical diabetes profiles should get similar prior auth documentation. Large variations indicate a problem.
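One way to sketch a consistency check is to group outputs by patient profile and compare them pairwise. The example below uses plain text similarity from the standard library as a crude proxy; the `profile_of` mapping and the 0.8 threshold are assumptions, and a production check would probably compare structured fields instead:

```python
from difflib import SequenceMatcher
from itertools import combinations

def inconsistent_pairs(outputs_by_case, profile_of, min_similarity=0.8):
    """Flag pairs of cases that share a profile but whose outputs diverge."""
    by_profile = {}
    for case_id in outputs_by_case:
        by_profile.setdefault(profile_of[case_id], []).append(case_id)

    flagged = []
    for case_ids in by_profile.values():
        for a, b in combinations(sorted(case_ids), 2):
            ratio = SequenceMatcher(None, outputs_by_case[a], outputs_by_case[b]).ratio()
            if ratio < min_similarity:
                flagged.append((a, b, round(ratio, 2)))
    return flagged
```

Cases with different profiles are never compared, so the check only fires where similar inputs produced dissimilar outputs.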

Policy compliance

Does output meet current requirements?

Example: Documentation cites payer criteria version from Q3 2024, not outdated Q1 2023 version.
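A small sketch of a version check, assuming you maintain a registry of the criteria version currently in force for each payer. The registry, the payer name, and the version format are hypothetical:

```python
# Hypothetical registry of the criteria version currently in force per payer.
CURRENT_CRITERIA = {"ExamplePayer": "2024-Q3"}

def policy_version_issue(payer, cited_version, registry=CURRENT_CRITERIA):
    """Return None if the cited version is current, otherwise a short reason."""
    current = registry.get(payer)
    if current is None:
        return f"no criteria version on file for {payer}"
    if cited_version != current:
        return f"cites {cited_version}, current is {current}"
    return None
```

The check is only as good as the registry, so keeping it current is part of the evaluation work.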

Run these on 100% of outputs. Flag failures immediately.

Layer 2: Clinical Expert Review (Sample-Based)

Automated checks catch technical errors. They don't catch clinical inappropriateness.

What physicians review:

Is the clinical reasoning sound?

Would I approve this if it came from a colleague?

Are there safety concerns I'd catch that AI missed?

Is the documentation quality acceptable for the medical record?

Sample size: 5-10% of outputs, randomly selected. Higher for new AI deployments, lower for mature systems.

Frequency: Weekly for high-volume workflows. Monthly for lower-volume.
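A minimal sketch of drawing the review sample, assuming outputs are identified by IDs. The function name and the seed parameter are illustrative; the seed exists only so a given week's sample can be reproduced for audit:

```python
import math
import random

def sample_for_review(output_ids, rate=0.05, seed=None):
    """Pick a simple random sample of outputs for physician review."""
    if not 0 < rate <= 1:
        raise ValueError("rate must be in (0, 1]")
    ids = list(output_ids)
    if not ids:
        return []
    # Round before ceil to avoid floating-point noise; always review at least one.
    k = min(len(ids), max(1, math.ceil(round(len(ids) * rate, 9))))
    return random.Random(seed).sample(ids, k)

this_week = sample_for_review(range(200), rate=0.05, seed=1)
```

Raising `rate` for a new deployment and lowering it as the system matures matches the guidance above.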

Scoring rubric:

Clinical accuracy: Facts correct? (Yes/No)

Clinical appropriateness: Reasoning sound? (1-5 scale)

Documentation quality: Meets standards? (1-5 scale)

Safety concerns: Any red flags? (Yes/No with explanation)

Action thresholds:

Any safety concern → Immediate investigation

Appropriateness score <4 → Review that case category

Quality score trending down → Retrain or update AI
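The rubric and thresholds above could be encoded roughly as follows. The field names are illustrative, and the definition of "trending down" (a fall in each of the last three weekly intervals) is an assumption, since the text does not define it:

```python
from dataclasses import dataclass

@dataclass
class Review:
    case_category: str
    facts_correct: bool
    appropriateness: int      # 1-5 scale
    quality: int              # 1-5 scale
    safety_concern: str = ""  # empty string means no red flag

def actions_for(review):
    """Map a single physician review to the actions it triggers."""
    actions = []
    if review.safety_concern:
        actions.append("immediate_investigation")
    if review.appropriateness < 4:
        actions.append(f"review_category:{review.case_category}")
    return actions

def quality_trending_down(weekly_means, weeks=3):
    """True if mean quality fell in each of the last `weeks` intervals."""
    recent = weekly_means[-(weeks + 1):]
    return len(recent) == weeks + 1 and all(b < a for a, b in zip(recent, recent[1:]))
```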

Layer 3: Operational Metrics (Measure Real-World Impact)

Approval rates

How often do staff approve AI output without edits?

Target: >90% for mature systems

Low rate indicates quality problems or inappropriate content
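A sketch of the calculation, assuming each staff review is logged as an (approved, edited) pair. The logging format is an assumption:

```python
def unedited_approval_rate(reviews):
    """Share of outputs approved with no edits.

    `reviews` is a list of (approved, edited) booleans; returns None if empty.
    """
    if not reviews:
        return None
    clean = sum(1 for approved, edited in reviews if approved and not edited)
    return clean / len(reviews)

def meets_target(rate, target=0.90):
    """The target is a strict 'greater than', matching the >90% goal."""
    return rate is not None and rate > target
```

Outputs approved after edits count against the rate, since the metric is about output that needed no changes.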

Edit patterns

What do staff consistently change?

If 40% of edits remove the same type of information, that's a systematic problem to fix.
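To illustrate, assuming each staff edit has already been tagged with a category (the tagging itself is the hard part and is not shown), finding dominant edit types is a simple count. The category labels are hypothetical:

```python
from collections import Counter

def dominant_edit_types(edit_log, share_threshold=0.4):
    """Return {edit_type: share} for types at or above the share threshold."""
    counts = Counter(edit_log)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {kind: n / total for kind, n in counts.items() if n / total >= share_threshold}

edits = ["removed_family_history"] * 4 + ["fixed_dosage"] * 3 + ["reworded"] * 3
systematic = dominant_edit_types(edits)
```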

Payer approval rates

For prior authorizations, how often do payers approve?

Compare to baseline. If AI-assisted authorizations have lower approval rates, something's wrong.

Time savings

Are workflows actually faster?

Measure end-to-end time: AI execution + staff review. Compare to baseline manual process.

Staff satisfaction

Do staff find AI helpful or burdensome?

Survey monthly. Track trends. Declining satisfaction predicts adoption failure.

Three frameworks set the standard for healthcare AI evaluation. Hospitals reference them during procurement. Regulators expect you to know them.

MedHELM (Stanford)

What it is:

121 real-world medical tasks, 31 datasets (mostly real EHR data), clinician-validated taxonomy organizing medical AI into five categories: clinical decision support, clinical documentation, patient communication, medical research, administrative workflows.

Why it matters:

First evaluation framework grounded in actual clinical workflows, not researcher convenience. Model performance on synthetic benchmarks sometimes reverses on real EHR data. Artificial tasks mislead.

Key results:

When Stanford evaluated frontier LLMs, performance varied dramatically across task categories. Models excelling in clinical decision support might underperform on patient communication.

When to reference it:

During product development to ensure you're testing on realistic tasks. During hospital procurement to show you've evaluated against clinical standards.

HealthBench (OpenAI)

What it is:

5,000 multi-turn conversations simulating real interactions, evaluated by physician-written rubrics. 48,562 rubric criteria across five dimensions. 262 physician validators across 26 specialties and 60 countries.

Why it matters:

Evaluates realistic clinical conversations using multidimensional rubrics. Single-score benchmarks hide distinct aspects of clinical quality (accuracy vs. communication vs. context awareness). Same model might excel at emergency triage but struggle with patient education.

Key results:

Automated grading using GPT-4 achieved 0.71 agreement with human physicians, comparable to inter-physician agreement. LLM-based evaluation can be reliable when properly designed.

When to reference it:

When evaluating conversational AI or patient-facing systems. Use the five-dimensional framework (accuracy, completeness, context awareness, communication, instruction-following) for your own evaluation rubrics.
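If you adapt the five dimensions into your own rubric, per-dimension scoring might look like the sketch below. The point weights and the aggregation rule (earned points over positive points, clipped to the 0-1 range) are assumptions for illustration, not a description of the published scoring procedure:

```python
from collections import defaultdict

def rubric_scores(criteria):
    """Score each dimension from (dimension, points, met) tuples.

    Positive points reward desired behavior; negative points penalize
    undesired behavior when the criterion is met.
    """
    earned = defaultdict(float)
    possible = defaultdict(float)
    for dimension, points, met in criteria:
        if points > 0:
            possible[dimension] += points
        if met:
            earned[dimension] += points
    # Dimensions with no positive criteria are omitted rather than divided by zero.
    return {d: min(1.0, max(0.0, earned[d] / possible[d])) for d in possible}

graded = [
    ("accuracy", 5, True),
    ("accuracy", 5, False),
    ("accuracy", -4, True),   # a penalized behavior that did occur
    ("communication", 3, True),
]
scores = rubric_scores(graded)
```

Keeping the dimensions separate preserves the point made above: a single combined score would hide that this response communicates well but is weak on accuracy.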

What it is:

Open-source evaluation framework integrated with Azure AI Foundry. Lets organizations assess AI on their own data with their own success criteria.

Why it matters:

Generic benchmarks inform research. Healthcare organizations care about performance on their specific patient population with their specific workflows. This provides the infrastructure for that.

Key results:

Evaluation should be embedded in the agent lifecycle: pre-deployment validation, continuous monitoring, regression testing, compliance documentation. Not a one-time checkpoint.

When to reference it:

When building evaluation infrastructure for your product or organization. Reference architecture for production systems.

Different healthcare AI applications need different evaluation approaches.

02. Clinical Documentation

Primary metrics:

Clinician satisfaction: >90%

Hallucination rate: <5%

Incomplete documentation: <2%

Edit time: Minimal (should save time, not add burden)

Evaluation approach:

Clinician review: Physicians rate quality and identify errors

Hallucination testing: Systematically test for fabricated findings

Completeness checks: Ensure all required documentation elements present

Bias evaluation: Verify completeness across demographics and conditions

Red flags:

Physicians spending more time editing than writing from scratch

Repeated hallucinations of clinical facts not in source data

Documentation quality varies by patient insurance type or demographics

03. Patient Communication and Education

Primary metrics:

Completion rate: >80%

Patient satisfaction: >4/5

Visit quality improvement: Measurable impact

Health literacy adaptation: Explanations match patient education level

Evaluation approach:

Patient feedback: Clarity, relevance, helpfulness

Engagement tracking: Completion rates for pre-visit tasks

Clinical impact: Whether patient preparation improves visit efficiency

Language accessibility: Multilingual capability and cultural appropriateness

Red flags:

High completion but low comprehension (completing without understanding)

Explanations too technical for patient population

Cultural inappropriateness or translation errors

04. Clinical Decision Support

Primary metrics:

Concordance with specialists: >85%

Sensitivity for dangerous conditions: >95%

False positive rate: Low (avoid alarm fatigue)

Recommendation interpretability: Clinicians can understand reasoning

Evaluation approach:

Prospective validation: Test on cases clinicians haven't seen (avoid bias)

Rare condition testing: Ensure reasonable behavior on atypical presentations

Outcome tracking: Link recommendations to patient outcomes over time

Explainability assessment: Verify clinicians can understand reasoning

Red flags:

High average accuracy but poor performance on rare conditions

Recommendations that clinicians can't explain to patients

Systematic underperformance on specific demographics
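For reference, sensitivity and false positive rate can be computed from labeled cases as sketched here. The input format, a list of (has_condition, flagged) pairs, is an assumption:

```python
def sensitivity_and_fpr(cases):
    """Compute (sensitivity, false_positive_rate) from (has_condition, flagged) pairs.

    Either value is None when its denominator is zero.
    """
    tp = fn = fp = tn = 0
    for has_condition, flagged in cases:
        if has_condition and flagged:
            tp += 1
        elif has_condition:
            fn += 1
        elif flagged:
            fp += 1
        else:
            tn += 1
    sensitivity = tp / (tp + fn) if (tp + fn) else None
    fpr = fp / (fp + tn) if (fp + tn) else None
    return sensitivity, fpr

cases = ([(True, True)] * 19 + [(True, False)]
         + [(False, True)] * 5 + [(False, False)] * 95)
sens, fpr = sensitivity_and_fpr(cases)
```

In this made-up example one missed case out of 20 puts sensitivity at exactly 95%, which does not clear a >95% target. Running the same function separately on rare conditions or on each demographic group is one way to surface the red flags listed above.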

Mistake 1: Only Testing at Launch

You test rigorously during development. Deploy. Stop evaluating.

Result: Model degrades silently. Nobody notices until physicians complain.

Fix: Continuous evaluation. Automated checks on 100%. Expert review on samples. Weekly reports.
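One possible shape for the automated part is a rolling pass rate compared against a baseline. The window size, baseline, and allowed drop below are placeholder values, not recommendations:

```python
from collections import deque

class RollingPassRate:
    """Tracks the pass rate of automated checks over the most recent outputs."""

    def __init__(self, window=500, baseline=0.97, max_drop=0.02):
        self.results = deque(maxlen=window)  # oldest results fall off automatically
        self.baseline = baseline
        self.max_drop = max_drop

    def record(self, passed):
        self.results.append(bool(passed))

    def rate(self):
        return sum(self.results) / len(self.results) if self.results else None

    def degraded(self):
        """True once the window is full and the rate is more than max_drop below baseline."""
        if len(self.results) < self.results.maxlen:
            return False
        return self.rate() < self.baseline - self.max_drop
```

Waiting for a full window before alerting avoids false alarms from the first handful of outputs, at the cost of a slower first signal.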

Mistake 2: Using Wrong Baseline

You measure "AI achieves 85% accuracy!" But you don't know if humans achieve 90% or 70%.

Result: You can't tell if AI is helping or hurting.

Fix: Measure baseline human performance first. Then compare AI to baseline. Improvement matters, not absolute numbers.
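A sketch of the comparison, assuming AI and human performance were measured on independent samples of comparable cases. The function name and the returned keys are illustrative:

```python
import math

def compare_to_baseline(ai_correct, ai_total, human_correct, human_total):
    """Compare AI accuracy with measured human accuracy."""
    ai = ai_correct / ai_total
    human = human_correct / human_total
    # Standard error of the difference between two independent proportions.
    std_error = math.sqrt(ai * (1 - ai) / ai_total + human * (1 - human) / human_total)
    return {"ai": ai, "human": human, "difference": ai - human, "std_error": std_error}

result = compare_to_baseline(85, 100, 90, 100)
```

With 100 cases on each side, an 85% versus 90% gap is about one standard error wide, so a sample that small cannot settle whether the AI is helping or hurting.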

Mistake 3: Ignoring Edge Cases

Staff are expected to review all cases, even those requiring clinical expertise beyond their scope.

Result: Staff approve inappropriate documentation because they don't know better, or everything goes to a physician, defeating the purpose of the AI.

Fix: Stratify performance by case complexity, patient demographics, clinical scenarios. Ensure performance is acceptable across ALL groups.
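Stratification can be sketched as a per-group accuracy summary with a floor. The group labels, the 0.95 floor, and the 30-case minimum are assumptions for illustration:

```python
from collections import defaultdict

def accuracy_by_group(records, floor=0.95, min_cases=30):
    """Summarize accuracy per group from (group, correct) pairs.

    Returns {group: (accuracy, n, status)} where status is "ok",
    "below_floor", or "insufficient_data".
    """
    totals = defaultdict(lambda: [0, 0])  # group -> [correct, n]
    for group, correct in records:
        totals[group][0] += int(bool(correct))
        totals[group][1] += 1

    summary = {}
    for group, (hits, n) in totals.items():
        accuracy = hits / n
        if n < min_cases:
            status = "insufficient_data"
        elif accuracy < floor:
            status = "below_floor"
        else:
            status = "ok"
        summary[group] = (accuracy, n, status)
    return summary

records = ([("routine", True)] * 98 + [("routine", False)] * 2
           + [("rare", True)] * 24 + [("rare", False)] * 16
           + [("pediatric", True)] * 5)
summary = accuracy_by_group(records)
```

Groups with too few cases are reported separately rather than passed, because a perfect score on five cases says very little.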

Mistake 4: No Action Thresholds

You collect metrics. You have dashboards. You don't define "what triggers investigation?"

Result: Metrics drift. Nobody responds. Evaluation becomes theater.

Fix: Set thresholds. Accuracy <95%? Investigate. Approval rate <90%? Review outputs. Safety violation? Immediate escalation. Make metrics actionable.
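The thresholds in this fix can be written down as data so they are reviewed and versioned like any other configuration. The metric names and action labels below are illustrative:

```python
# Thresholds taken from the fix above; metric and action names are hypothetical.
RULES = [
    ("accuracy", lambda v: v < 0.95, "investigate"),
    ("approval_rate", lambda v: v < 0.90, "review_outputs"),
    ("safety_violations", lambda v: v > 0, "escalate_immediately"),
]

def triggered_actions(metrics):
    """Return the actions whose rule fires; a missing metric is reported too."""
    actions = []
    for name, breached, action in RULES:
        if name not in metrics:
            actions.append(f"missing_metric:{name}")
        elif breached(metrics[name]):
            actions.append(action)
    return actions
```

Treating a missing metric as a finding in its own right guards against the quiet failure where a dashboard simply stops receiving data.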

Mistake 5: Evaluating AI in Isolation

You test AI accuracy on synthetic data in a controlled environment.

Result: AI performs well in lab. Fails in production when integrated with real EHRs, real payer portals, real workflows.

Fix: Evaluate in production environment. Real data. Real integrations. Real time constraints. Real human reviewers.